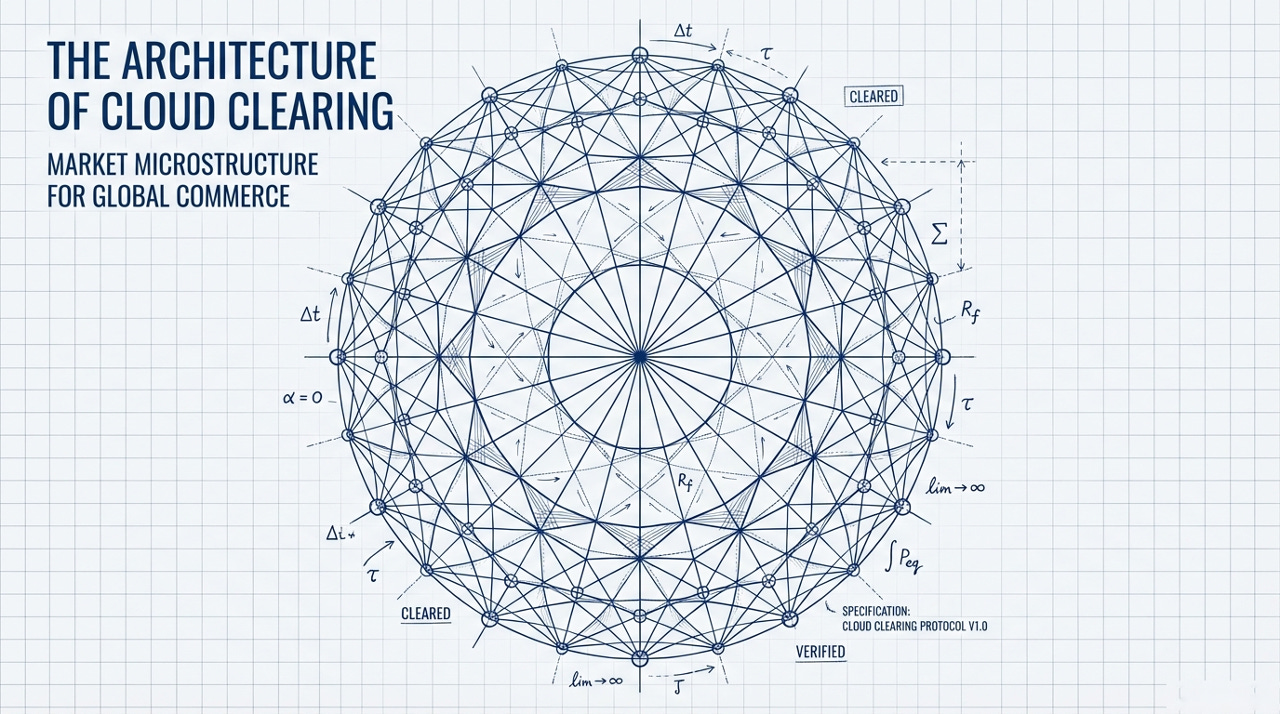

Cloud Clearing

Market Microstructure for Global Commerce

A framework for applying dealer economics to payment settlement, and what tokenized money markets could mean for the future of clearing.

The company of the future is default global — hiring across borders from day one, selling into dozens of markets simultaneously, managing multi-entity structures that span jurisdictions. Software transcends borders effortlessly; localizing it can be as simple as translating the language. But finance remains stubbornly local, and clearing and settlement — the infrastructure that actually moves and settles money — is hyper-local: purpose-built for specific payment types, specific jurisdictions, specific settlement cycles.

This mismatch between globally operating companies and locally siloed settlement is the hidden tax on global commerce. What follows uses the tools of market microstructure — dealer economics, money market hierarchy, transaction cost theory — to explain why clearing infrastructure is fragmented the way it is, and what structural conditions would need to hold for it to be unified. The answer turns on a property of commercial payment flows that has no analog in financial markets: they carry no information.

1. The Dealer Function

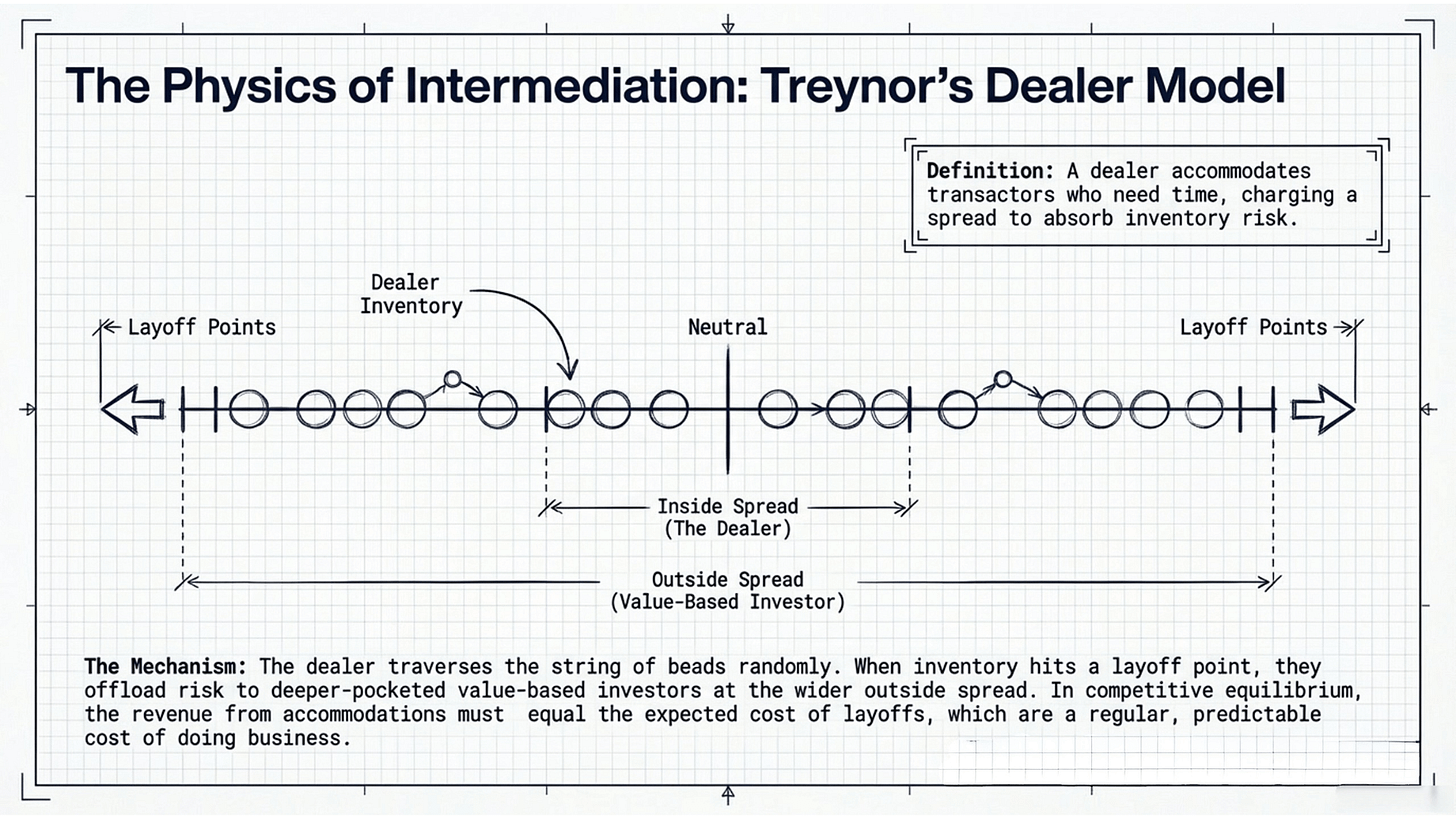

In 1987, Jack Treynor published a deceptively simple model of how financial markets actually work. The core idea: a dealer is someone who accommodates transactors to whom time is important, in exchange for charging buyers a higher price than he pays sellers. The dealer carries inventory — absorbing selling pressure by going long, absorbing buying pressure by going short — and earns the spread between his bid and ask for this service.

But the dealer is not the only market-maker. Treynor identifies a second, deeper layer: the value-based investor, who also stands ready to buy and sell, but at a much wider spread. The value-based investor has more capital, a longer time horizon, and a greater tolerance for risk. When the dealer accumulates too much inventory — when his position reaches the maximum he’s willing to hold — he “lays off” to the value-based investor, absorbing a loss equal to the difference between the inside spread (his own) and the outside spread (the value-based investor’s).

This creates a simple but powerful structure. The dealer’s spread is determined by the cost of laying off: in competitive equilibrium, the revenue from accommodations must equal the expected cost of layoffs. The dealer’s price moves with his position — when he’s long, he shades his price down to attract buyers; when he’s short, he shades up to attract sellers. And the whole system is anchored by the value-based investor, whose assessment of fundamental value sets the outside spread within which the dealer operates.

Treynor models the dealer’s inventory as a string of beads — discrete positions that the dealer traverses randomly as buy and sell orders arrive. At the ends of the string are the layoff points: positions beyond which the dealer cannot go without offloading to the value-based investor. Layoffs are not rare catastrophes — they are a regular, predictable cost of doing business, and the dealer’s spread must be set to cover them.
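The string-of-beads picture can be sketched as a small simulation. What follows is a minimal illustration, not Treynor’s own derivation: it assumes buy and sell orders arrive with equal probability, that each order moves the position by one bead, that a layoff returns the dealer to a flat book, and that each accommodated order earns half the inside spread. The function names and the `layoff_cost` parameter are hypothetical.

```python
import random

def layoff_rate(limit: int, n_orders: int = 100_000, seed: int = 0) -> float:
    """Fraction of orders that push the dealer past +/-limit and force a layoff."""
    rng = random.Random(seed)
    position = 0
    layoffs = 0
    for _ in range(n_orders):
        # +1: a seller hits the bid (dealer gets longer); -1: a buyer lifts the ask.
        position += rng.choice((-1, 1))
        if abs(position) > limit:
            layoffs += 1
            position = 0  # assumption: a layoff returns the dealer to a flat book
    return layoffs / n_orders

def break_even_inside_spread(limit: int, layoff_cost: float) -> float:
    """Spread at which revenue per order (half the spread, by assumption)
    equals the expected layoff cost per order."""
    return 2 * layoff_rate(limit) * layoff_cost

for limit in (2, 4, 8):
    rate = layoff_rate(limit)
    spread = break_even_inside_spread(limit, layoff_cost=1.0)
    print(f"limit={limit} layoff_rate={rate:.4f} break_even_spread={spread:.4f}")
```

Under these assumptions the expected number of orders between layoffs is (limit + 1) squared, so a dealer willing to carry a longer string of beads lays off less often and can quote a tighter inside spread for the same layoff cost.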

Two forces threaten the dealer. The first is random position accumulation: a long run of sells (or buys) that pushes him toward his layoff point through pure bad luck. The second is adverse selection — “getting bagged” by informed traders whose orders carry information about future prices. When an informed seller hits the dealer’s bid, the dealer is buying an asset whose value is about to decline. This is the deeper risk, and the one that determines the outside spread. Value-based investors must set their spread wide enough that gains from accommodating uninformed, liquidity-motivated flow offset losses from trading against informed flow.

This framework — dealers intermediating time-sensitive flow, managing inventory along a string of beads, laying off to deeper-pocketed value-based investors at the outside spread — is the microstructure of every liquid market. But Treynor wrote about securities. What happens when we apply this lens to money?

2. Money as a Dealer Function

Perry Mehrling, building explicitly on Treynor, extends the dealer model to the monetary system itself. In Mehrling’s “Money View,” banks are not primarily lenders — they are dealers in money, specifically in term funding. A bank’s core function is to accommodate depositors who want liquidity (short-term, on-demand access to funds) and borrowers who want time (long-term, stable financing). The bank earns the spread between the rate it pays depositors and the rate it charges borrowers — the net interest margin — which is the banking analog of the dealer’s inside spread. Increasingly, banks perform this dealer function not just through traditional deposits and lending but through repo and reverse repo (lending and borrowing against collateral), through FX spot and swap markets (dealing in the exchange rate between national monies), and through commercial paper and CD issuance (short-duration funding instruments). Each of these is a dealer function across a different asset class, each with its own spread structure.

Like Treynor’s securities dealer, the bank carries inventory risk. Its “position” is the mismatch between the duration of its assets (long-term loans) and its liabilities (short-term deposits). And like the securities dealer, when this mismatch becomes too large — when too many depositors want their money back at once, or when the bank cannot roll its wholesale funding — the bank must “lay off” to a deeper source of liquidity.

In the domestic monetary system, that deeper source is the central bank. Mehrling’s key insight is that the central bank functions as the dealer of last resort: its outside spread — the discount window rate, the standing repo facility rate — anchors the entire hierarchy of money market spreads. Primary dealers quote inside spreads on government securities, banking on their ability to lay off to the Fed. Banks quote inside spreads on deposits and loans, banking on their ability to access the discount window. The whole system is a nested hierarchy of dealer functions, each layer intermediating between time-sensitive transactors at a tighter spread than the layer above, and each layer ultimately backstopped by the central bank’s willingness to absorb position at the outside spread.

Mehrling’s course on Money and Banking — one of the most influential treatments of monetary economics in the past two decades — structures this hierarchy through what he calls the “Four Prices of Money”:

par (the exchange rate between different forms of money),

interest (the price of borrowing),

the exchange rate (the price of foreign money), and

the price level (the price of goods in terms of money).

Each price is managed by a different set of institutions: par by the banking system, interest by the money market, exchange rates by the FX market, and the price level by the central bank through monetary policy.

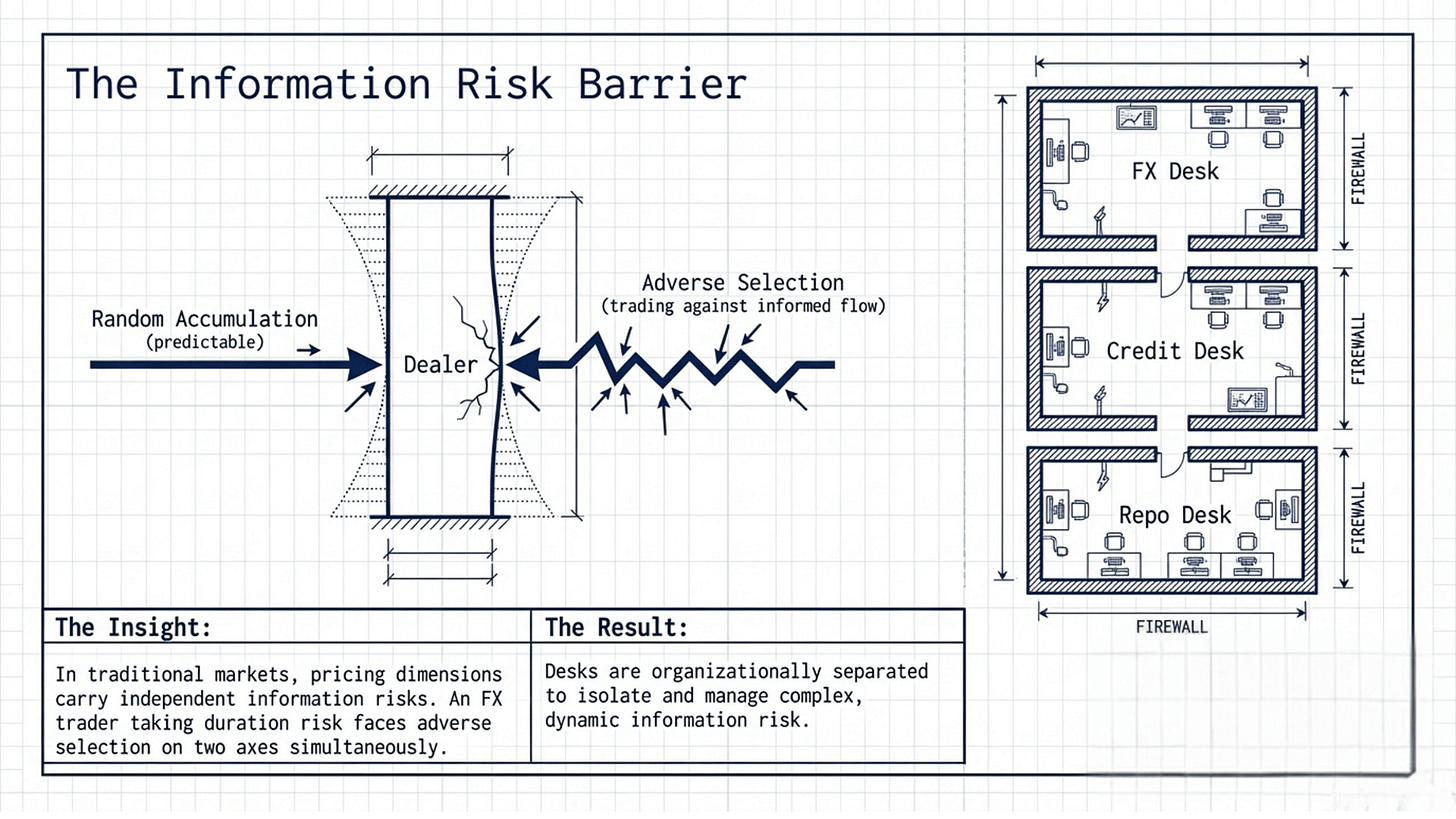

Critically, these prices are managed by separate dealer functions. The repo desk manages duration risk through repurchase agreements. The FX desk manages currency risk through spot, swap, and forward transactions. The credit desk manages counterparty risk through credit default swaps and lending standards. Each desk is its own string of beads, with its own position limits, its own layoff points, and its own spread economics. They interact in practice — an FX swap has both a currency component and an interest rate component — but they are optimized independently. No single venue jointly optimizes across all of Mehrling’s four prices simultaneously.

This separation is not a failure of imagination. It is a consequence of information asymmetry. In securities and money markets, each pricing dimension carries its own information risk. An FX trader who also takes duration risk is exposed to adverse selection on two axes simultaneously. The organizational separation of dealing desks is a risk management strategy: isolate each information risk to the desk best equipped to assess it.

The Prices of Money for Commercial Settlement

Mehrling’s four prices are the right framework for traditional money markets. But they are not the complete set. When we move to commercial settlement, the price level drops out — settlement horizons of seconds to hours are too short for inflation to matter. Two new prices emerge: liquidity value (the system-wide benefit of netting compression — implicit in money markets but the dominant source of economic gain in commercial settlement) and operational cost (per-transaction rail overhead — negligible for a $500 million repo but first-order for a $50 consumer payment).

Three prices (par, interest, and the exchange rate) are shared across both domains. The domain determines which of the remaining three bind: the price level in traditional money markets, liquidity value and operational cost in commercial settlement. The six prices are the union: different domains of financial intermediation make different prices visible. Existing payment infrastructure has never needed to explicitly price liquidity or operational cost; they have been bundled into opaque fee structures (interchange, network assessments, correspondent banking charges) rather than decomposed and independently optimized.

But what if there were a class of financial flows where none of these dimensions carried information risk?

3. Payment Systems as Fragmented Dealer Desks

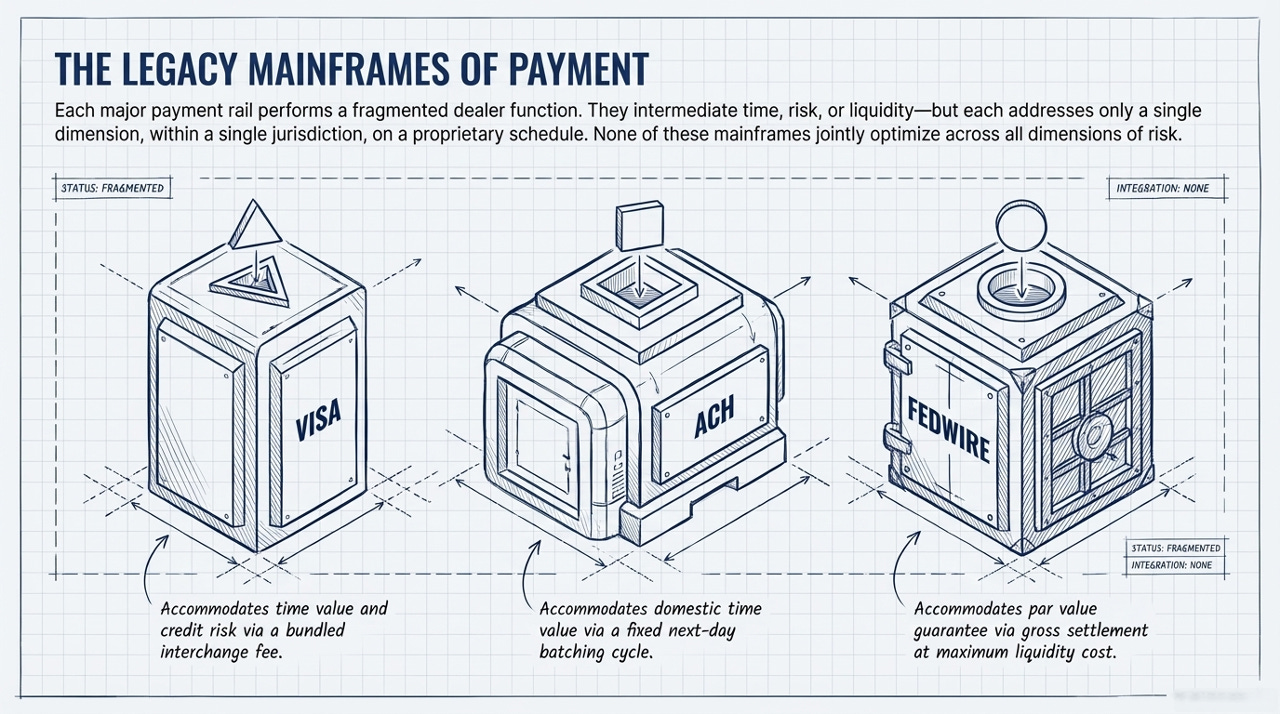

Consider the major payment rails through the lens of Treynor’s dealer model. Each one performs a dealer-like intermediation function — accommodating time-sensitive transactors, carrying inventory, earning a spread — but each addresses only one or two pricing dimensions, operates within a single jurisdiction, and runs on its own schedule.

Card networks like Visa and Mastercard net transactions across their member banks and settle the residual. The interchange fee is a composite instrument: it compensates the issuing bank for the time value of extending credit to the cardholder (the interest rate component) and for the counterparty risk of cardholder default (the par value component). Interchange is, in Treynor’s terms, a bundled inside spread — the card network is a dealer intermediating between merchants who want immediate assurance of payment and cardholders who want deferred settlement.

ACH processes batch domestic transfers on its own netting cycle — typically next-day. Its implicit spread is the float: the value of funds held between submission and settlement. For the originating bank, this float is revenue. For the recipient, it is cost. ACH is a dealer in time value, operating on a fixed, static schedule with no dynamic optimization.
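The float described above is simple-interest arithmetic. A minimal sketch, assuming a 360-day money market day count and purely hypothetical inputs (the amount, rate, and delay are illustrative, not ACH data):

```python
def float_value(amount: float, annual_rate: float, days: float, day_count: int = 360) -> float:
    """Simple-interest value of holding `amount` for `days` at `annual_rate`."""
    return amount * annual_rate * days / day_count

# Hypothetical example: $1,000,000 held for one day at a 5% annual rate.
example = float_value(1_000_000, 0.05, 1)
print(round(example, 2))
```

On these illustrative numbers, one day of float on a million dollars is worth roughly $139 to whoever holds the funds, and costs the same to whoever is waiting for them.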

Fedwire settles each transaction individually and immediately in central bank reserves. It is the ultimate par value guarantee — settlement in Fedwire dollars eliminates counterparty risk entirely. In Treynor’s framework, Fedwire is the value-based investor of last resort for domestic dollar payments: when all netting fails, when all commercial intermediation is exhausted, you settle in Fed money. The cost of doing so — the liquidity required to fund the gross payment — is the outside spread of the domestic payment system.

CHIPS, CLS, and correspondent banking networks perform analogous functions for large-value interbank netting, FX settlement risk, and cross-border credit intermediation respectively — each a separate dealer desk managing a single dimension.

Each of these systems is a mainframe — vertically integrated, purpose-built, expensive to access, and operating on its own proprietary protocols. Each has its own membership requirements, its own operating hours, its own fee structure. A bank that wants to participate in all of them must maintain separate connections, separate liquidity pools, separate operational teams, and separate reconciliation processes for each.

And none of them optimizes across all pricing dimensions simultaneously. Visa nets card transactions and earns significant revenue on cross-border FX conversion, but it prices these separately rather than jointly optimizing netting and currency exposure. ACH handles domestic batching but doesn’t price the time value of settlement acceleration. Fedwire guarantees par but at maximum liquidity cost. CLS reduces FX settlement risk through multilateral netting and payment-versus-payment mechanics, compressing funding requirements by roughly 96% through netting alone and by about 99% once in/out swaps are included, but it operates only for FX, without optimizing across the broader obligation graph.
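To make the idea of funding compression through multilateral netting concrete, here is a minimal sketch. The three-party obligation set is hypothetical and chosen only for illustration; it is not drawn from CLS or any other system’s data, and the function names are assumptions.

```python
from collections import defaultdict

def multilateral_net(obligations):
    """Net position per party: positive means net receiver, negative means net payer."""
    net = defaultdict(int)
    for debtor, creditor, amount in obligations:
        net[debtor] -= amount
        net[creditor] += amount
    return dict(net)

def funding_compression(obligations):
    """Share of gross funding avoided when only net payers fund their net amounts."""
    gross = sum(amount for _, _, amount in obligations)
    net_pay_in = sum(-v for v in multilateral_net(obligations).values() if v < 0)
    return 1 - net_pay_in / gross

# Hypothetical obligations: (debtor, creditor, amount)
obligations = [("A", "B", 100), ("B", "C", 90), ("C", "A", 95)]
print(multilateral_net(obligations))
print(round(funding_compression(obligations), 4))
```

In this toy graph, 285 units of gross obligations settle with only 10 units of funding, because each party pays or receives only its net position against the group rather than funding every payment individually.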

This fragmentation was manageable when most commerce settled through one or two dominant rails per geography. It is becoming untenable because of three reinforcing structural shifts.

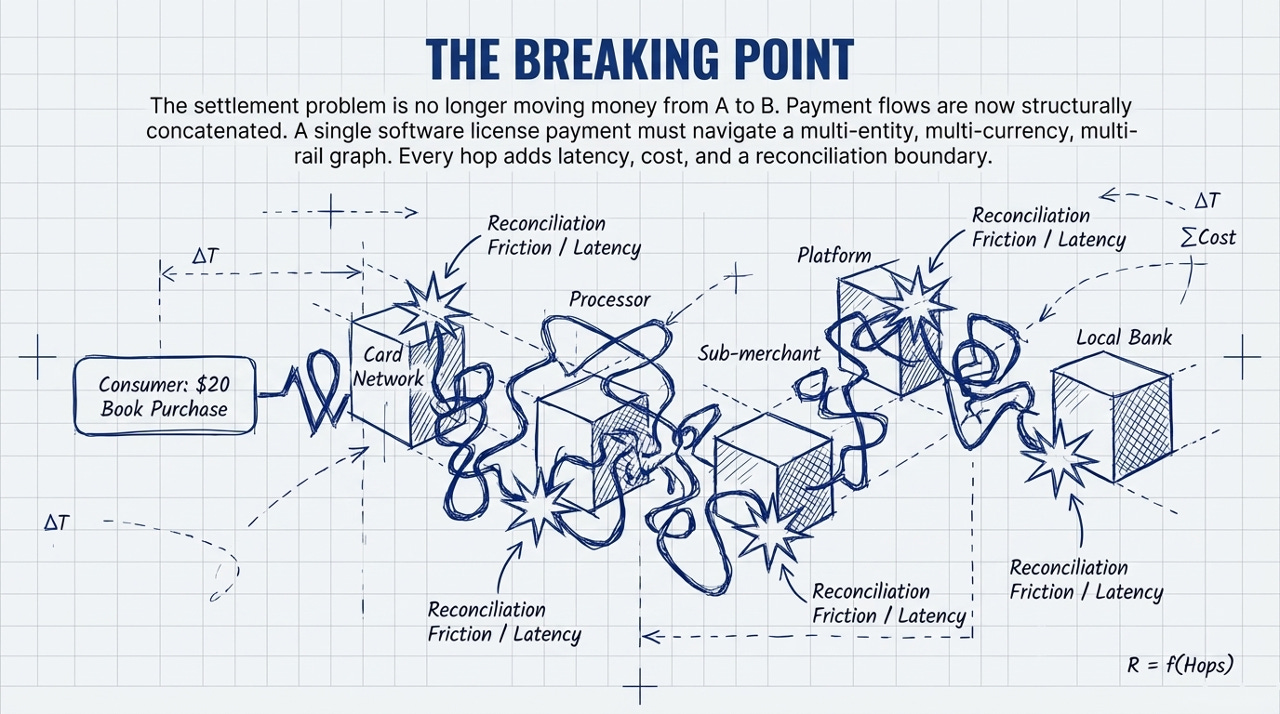

Payment flows have become concatenated: a single commercial transaction now passes through multiple intermediaries — card network, processor, platform, sub-merchant, bank — each adding latency, cost, and a reconciliation boundary. A $20 book purchase might traverse five separate systems before the author receives payment.

They have become fragmented: the same merchant receives payments through cards, real-time payment systems, ACH, buy-now-pay-later providers, and increasingly tokenized rails. Each method has different settlement timing, cost structure, counterparty risk, and reconciliation requirements. No single rail sees the whole picture.

And they have become globalized — not merely cross-border, but structurally multi-jurisdictional. A software company collecting license revenue in Europe must allocate it across an IP holding entity in Ireland, a local booking entity in Germany, and a treasury in-house bank in the US — each with its own regulatory, tax, and settlement requirements. A global platform must accept payments in dozens of local methods (Pix in Brazil, iDEAL in the Netherlands, UPI in India) while managing payouts to merchants across its worldwide base. The settlement problem is no longer “move money from A to B.” It is “optimize flows across a multi-entity, multi-currency, multi-rail, multi-jurisdictional graph” — and no existing mainframe system was designed for that.

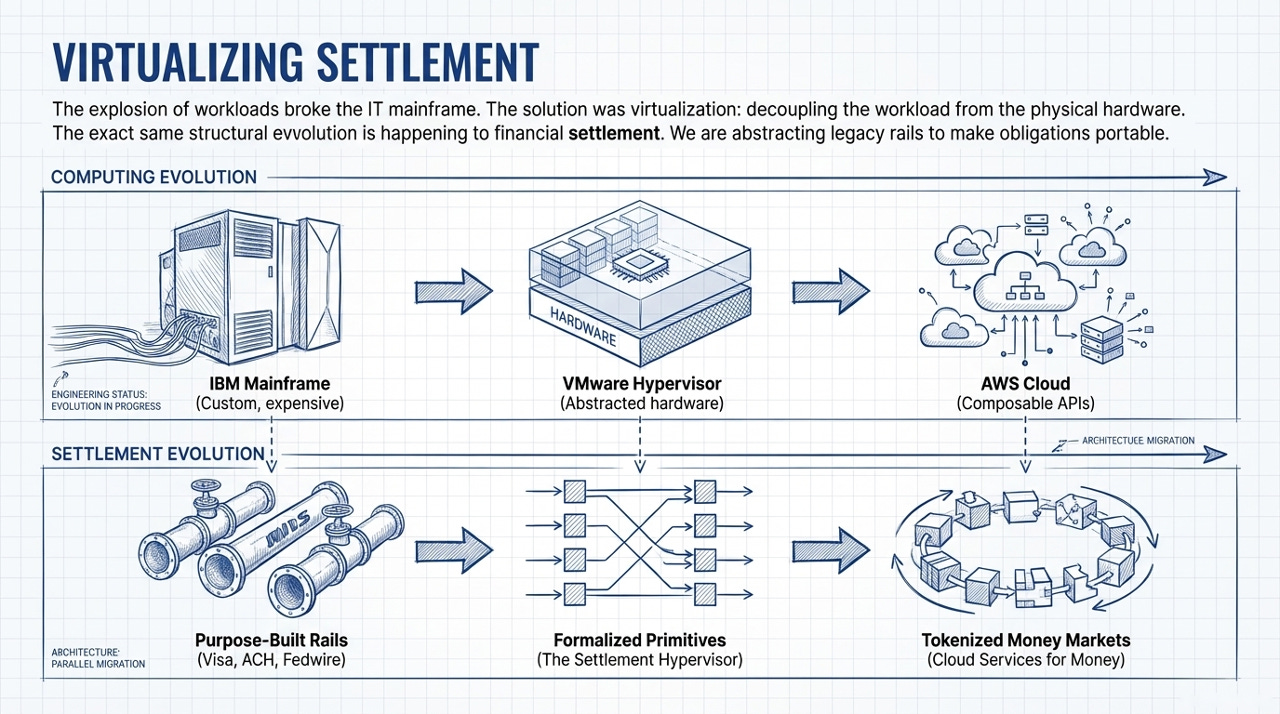

This concatenation, fragmentation, and globalization is the payment system’s equivalent of what happened to corporate IT in the early 2000s: the number and diversity of workloads exploded beyond what purpose-built mainframes could efficiently manage. The response in computing was virtualization, then cloud. The question is whether the same transformation is possible for settlement.

4. Zero Alpha and the Small Composite Bead

The answer depends on a structural property of commercial payment flows that has no analog in securities or money markets.

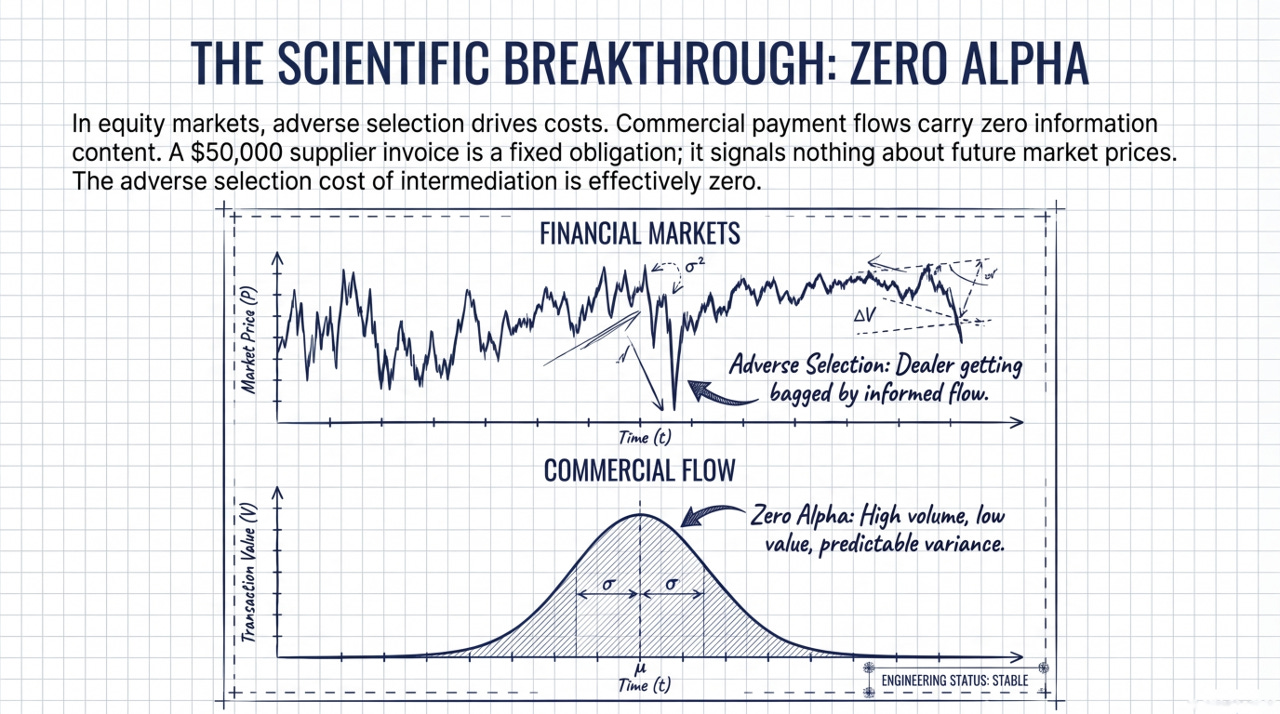

In equity markets, the central problem of market microstructure is adverse selection. When a dealer receives an order, she doesn’t know whether the counterparty is an uninformed liquidity trader or an informed trader who knows something she doesn’t. If the latter, the dealer is — in Treynor’s memorable phrase — “getting bagged”: buying an asset whose value is about to fall, or selling one whose value is about to rise. This information risk is what determines the outside spread and, through it, the entire cost structure of intermediation.

Retail equity flow — the orders placed by individual investors without private information — is prized by market makers precisely because it is uninformed. It carries no adverse selection cost, which is why dealers pay brokers for the right to trade against it.

Commercial payment flows are structurally analogous to retail equity flow — not because the participants are unsophisticated, but because the obligations themselves carry no information content. A $50,000 supplier payment is a fixed obligation: its amount was determined when the invoice was issued. It tells the intermediary nothing about future supplier payments, future market prices, or future exchange rates. Unlike a securities trade, a payment instruction cannot “move against” the party that processes it. The obligation is what it is.

This is the Zero Alpha thesis: commercial payment flows carry no information content with respect to timing, making the adverse selection cost of intermediation effectively zero.

The implications for dealer economics are profound. In Treynor’s framework, the dealer faces two risks: random position accumulation and adverse selection. Zero Alpha eliminates the second entirely. An intermediary accumulating commercial payment obligations in a netting buffer faces only the first risk — the random walk along the string of beads toward a layoff point. And the characteristics of commercial flow make even this residual risk structurally tame.

Consider the nature of the beads themselves. Consumer-to-business and business-to-business commercial flows are high volume and low value per transaction. A payment processor intermediating merchant payouts handles millions of transactions daily, each individually small. By the Central Limit Theorem, provided individual payments are roughly independent and none dominates the total, the aggregate net position (the sum of all obligations in the netting buffer) is approximately normally distributed, and its standard deviation grows only with the square root of the number of payments while gross volume grows linearly. The variance of the aggregate is predictable and well-behaved. Unlike equity market making, where a single large informed order can push the dealer from neutral to maximum position in one move, no individual commercial payment creates meaningful position risk. The random walk along the string of beads takes small steps.
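The small-steps argument can be checked with a quick simulation. This is a sketch under stated assumptions: payments are independent, equally likely to be inflows or outflows, and sized uniformly between 10 and 100 units. Those distributional choices and all names below are hypothetical.

```python
import random
import statistics

MEAN_SIZE = 55.0  # mean of the hypothetical uniform(10, 100) payment size

def net_position(n_payments: int, rng: random.Random) -> float:
    """Signed sum of n small payments: inflows positive, outflows negative."""
    return sum(rng.choice((-1, 1)) * rng.uniform(10, 100) for _ in range(n_payments))

def net_to_gross_ratio(n_payments: int, trials: int = 100, seed: int = 0) -> float:
    """Standard deviation of the net position divided by expected gross volume."""
    rng = random.Random(seed)
    nets = [net_position(n_payments, rng) for _ in range(trials)]
    return statistics.pstdev(nets) / (n_payments * MEAN_SIZE)

small = net_to_gross_ratio(100)
large = net_to_gross_ratio(10_000)
print(f"n=100: {small:.4f}  n=10000: {large:.4f}")
```

With these assumptions the ratio of net-position volatility to gross volume falls roughly as one over the square root of the number of payments, so a hundredfold increase in volume cuts the relative position risk by about a factor of ten.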

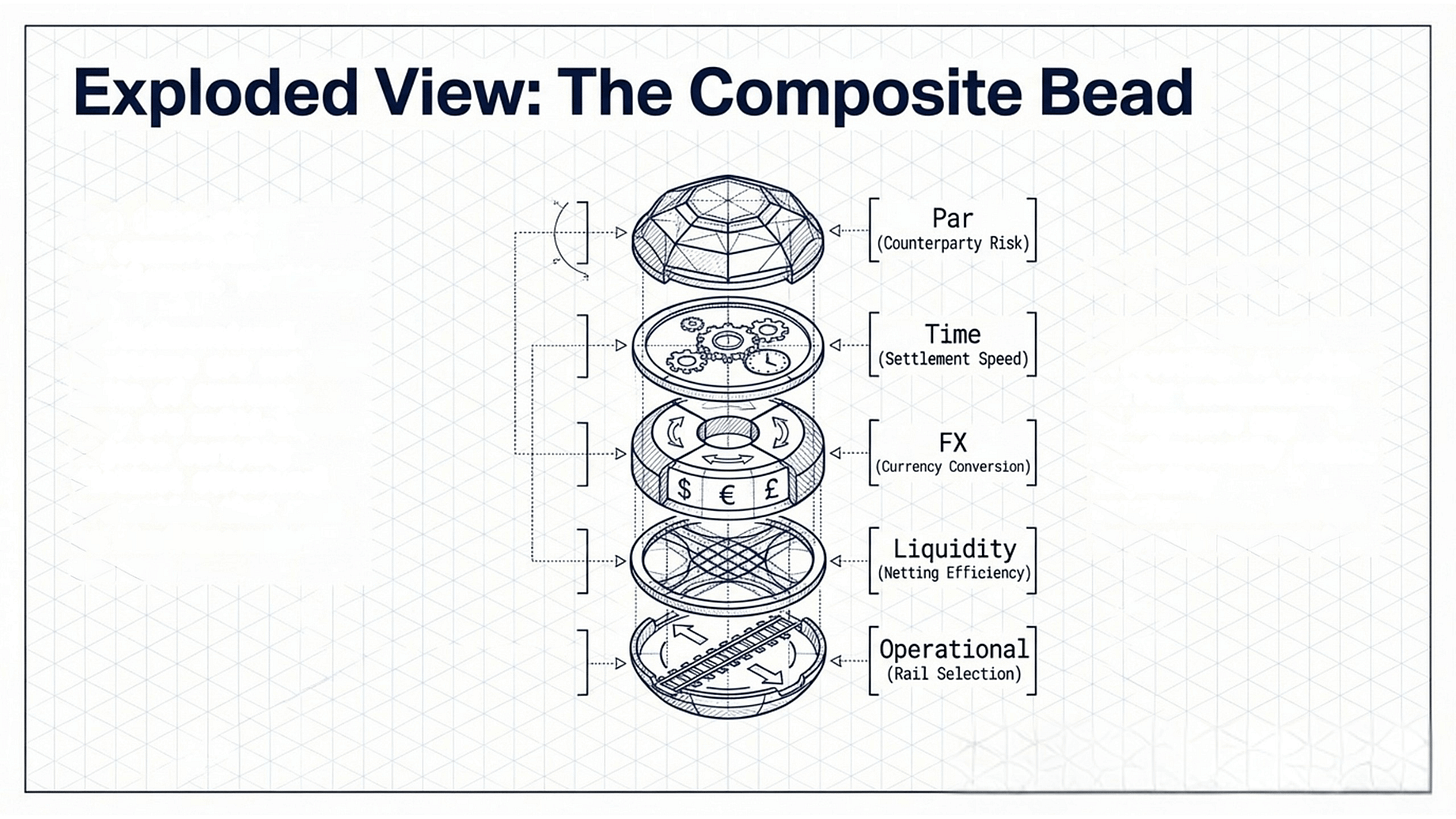

But the beads themselves are also different from those in securities or money markets. An obligation in a commercial payment graph has simultaneous exposure across multiple pricing dimensions: par value (counterparty risk), time value (settlement speed), FX value (currency conversion), liquidity value (netting efficiency), and operational cost (rail selection). In Treynor’s original model, the bead is a scalar — the dealer’s net position in a single security. In Mehrling’s money market extension, each pricing dimension has its own string of beads, managed by a separate desk. In a formalized settlement protocol, the beads are composite: multidimensional positions that can be jointly optimized.

This matters because position buildup along one dimension may be offset by favorable positioning along another. A netting buffer that is accumulating FX exposure in one currency pair may simultaneously be reducing liquidity requirements through improved compression. The probability of hitting layoff points on all dimensions simultaneously — the multidimensional equivalent of the dealer blowup — is much lower than in any single-dimension model.

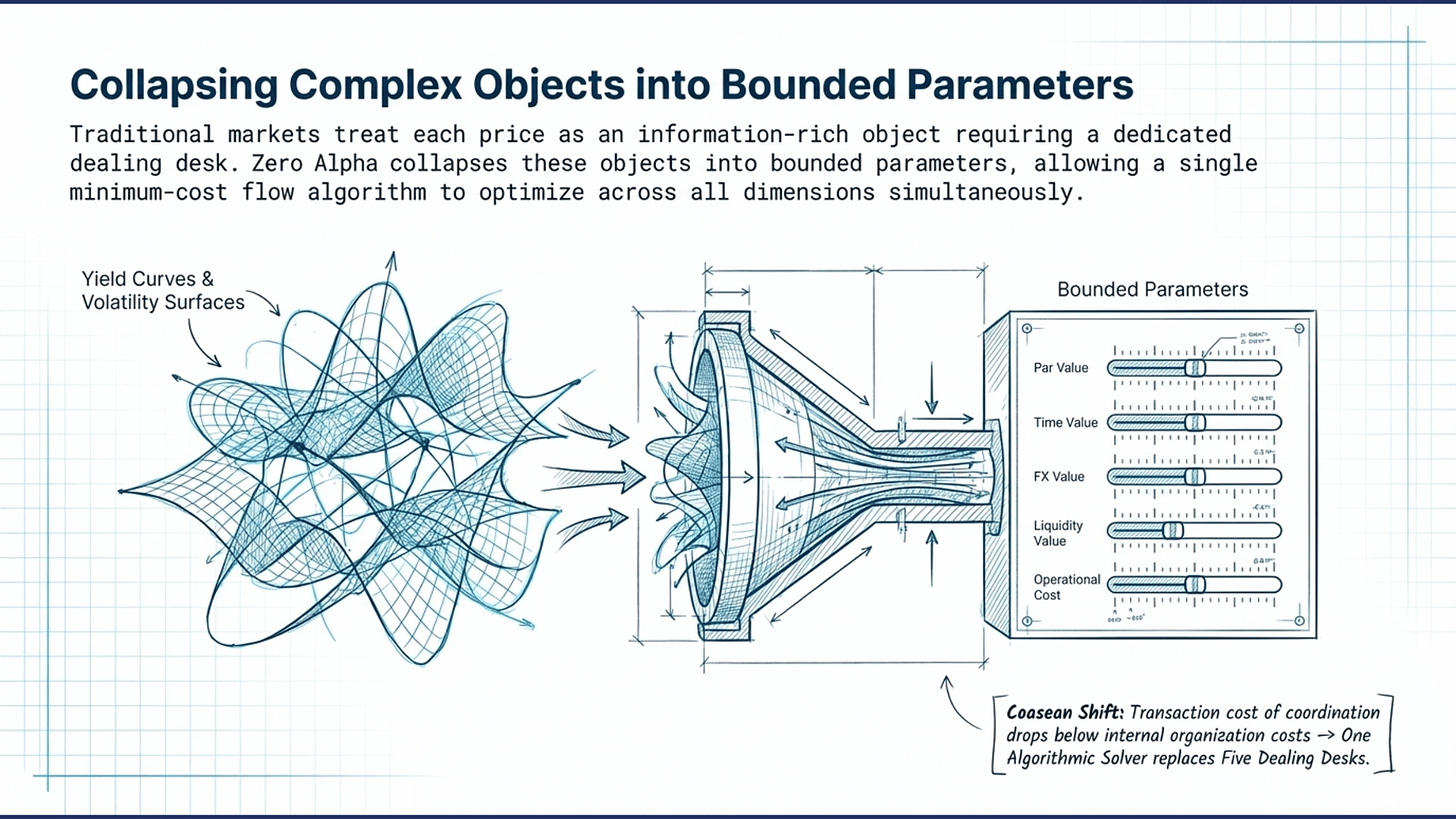

But cross-dimensional offsetting is not the only reason composite beads are tractable. There is a deeper structural point: in commercial settlement, each pricing dimension behaves as a parameter with a relatively bounded range, rather than a rich object with its own internal dynamics that must be managed independently.

Consider the difference. In traditional money markets, each of Mehrling’s prices is a high-dimensional object in its own right: the interest rate is an entire yield curve with term structure, convexity, and basis risk; FX is a surface of spot, forward, and implied volatility across dozens of currency pairs; credit risk is a term structure of default probabilities with correlation dynamics across counterparties and sectors. Each is rich enough, dynamic enough, and information-laden enough to require a dedicated team with specialized models. The organizational separation of dealing desks is not a convention — it is a response to the irreducible complexity of each pricing dimension as an independent information domain.

In commercial settlement, Zero Alpha collapses these objects into parameters. Par value operates in a narrow band among licensed institutions — the question is not “probability of default over two years?” but “JPMorgan tokenized deposit or well-capitalized stablecoin?” Time value reduces to the float cost of a delay measured in seconds. FX value involves the spread on a handful of major pairs over horizons where the spot rate barely moves. Liquidity value and operational cost are functions of network topology and fee schedules — statistical and administrative inputs, not market prices.

When each dimension collapses from object to parameter, the optimization problem changes category. Five separate desks managing five complex objects gives way to a single objective function with five bounded parameters that a Minimum-Cost Flow algorithm can jointly optimize — an approach shown by Fleischman and Dini (2021) to produce provably optimal netting solutions in trade credit networks, and one that extends naturally to the multidimensional settlement problem described here.
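As an illustration of the optimization category involved, the sketch below clears a small obligation graph by solving a minimum-cost circulation with the classical negative-cycle-cancelling method. It is not the implementation from the cited paper: it assumes integer amounts and a single cost of -1 per unit cleared (so it maximizes total cleared value), and the function name and example graph are hypothetical. A multidimensional version would replace the constant cost with a per-edge cost combining the five parameters.

```python
def clear_obligations(obligations):
    """Cancel as much obligation value as possible around cycles.

    obligations: list of (debtor, creditor, amount) with integer amounts.
    Returns the obligations that remain after clearing.
    """
    nodes = sorted({n for d, c, _ in obligations for n in (d, c)})
    # Residual edges in pairs: index 2i is the obligation, 2i + 1 its reverse.
    frm, to, cap, cost = [], [], [], []
    for d, c, a in obligations:
        frm += [d, c]
        to += [c, d]
        cap += [a, 0]
        cost += [-1, 1]  # clearing a unit earns 1; undoing it costs 1
    while True:
        # Bellman-Ford from a virtual source (all distances start at 0).
        dist = {n: 0 for n in nodes}
        pred = {n: None for n in nodes}
        last = None
        for _ in range(len(nodes)):
            last = None
            for e in range(len(to)):
                if cap[e] > 0 and dist[frm[e]] + cost[e] < dist[to[e]]:
                    dist[to[e]] = dist[frm[e]] + cost[e]
                    pred[to[e]] = e
                    last = to[e]
            if last is None:
                break
        if last is None:
            break  # no negative cycle left: the circulation is optimal
        # Step back len(nodes) times to land on the negative cycle itself.
        x = last
        for _ in range(len(nodes)):
            x = frm[pred[x]]
        cycle, v = [], x
        while True:
            e = pred[v]
            cycle.append(e)
            v = frm[e]
            if v == x:
                break
        push = min(cap[e] for e in cycle)
        for e in cycle:
            cap[e] -= push
            cap[e ^ 1] += push
    return [(d, c, cap[2 * i]) for i, (d, c, _) in enumerate(obligations) if cap[2 * i] > 0]

# Hypothetical obligation cycle: A owes B, B owes C, C owes A.
remaining = clear_obligations([("A", "B", 100), ("B", "C", 80), ("C", "A", 60)])
print(remaining)
```

In the example, 60 units cancel around the cycle without any money moving, leaving only the residual obligations to be settled through an underlying rail.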

This is, in a formal sense, why composite beads are computationally and organizationally feasible for commercial settlement in a way they never could be for securities or money markets. The absence of information content doesn’t just eliminate adverse selection — it simplifies the pricing landscape from a set of objects requiring specialized management to a set of parameters amenable to joint optimization. The dealer in commercial settlement doesn’t need five desks. It needs one solver.

And even when layoff occurs, that is, when accumulated obligations must settle gross through existing rails because netting cannot fully resolve them, the wrong-way risk is bounded. In equity markets, the dealer lays off to a value-based investor whose price may have moved sharply against the dealer’s position. In commercial settlement, the “layoff” is settling through Fedwire, or ACH, or a card network, and the cost of doing so is high but predictable. Rail fees don’t gap against you the way equity prices do. The outside spread of the commercial payment system is wide (multiple intermediary fees, FX spreads, float costs) but stable. The dealer in commercial settlement knows what his layoffs will cost before he takes the position.

Small beads. Composite dimensions. Bounded wrong-way risk. Zero information content. These structural properties create the conditions for a fundamentally different market microstructure — one where intermediation can operate at frequencies and transaction sizes that are impossible in markets where adverse selection is the dominant cost.

5. Cloud Clearing: Virtualizing Settlement

If existing payment rails are mainframes — vertically integrated, purpose-built, expensive to access — then the question becomes: what does cloud computing look like for settlement?

The analogy is more than metaphorical. The structural parallels are precise.

The mainframe era. In computing, the mainframe model worked when organizations had a small number of large, well-defined workloads. IBM built purpose-specific machines optimized for batch processing, transaction handling, or scientific computation. If you wanted serious compute, you bought a mainframe, hired a team to run it, and built your applications on its proprietary stack. Access was expensive, integration was custom, and each machine handled one workload well.

Payment settlement infrastructure follows the same pattern. Each major rail — Visa, ACH, Fedwire, CLS — is a mainframe optimized for a specific workload (card netting, domestic batch, gross settlement, FX risk elimination). Access requires expensive memberships, custom integrations, and dedicated operational teams. Each handles its workload well. None was designed for a world where a single transaction traverses five systems and a single merchant receives payments through six different methods.

Virtualization. The first step away from mainframes was the hypervisor: software that abstracted physical hardware into virtual machines, allowing multiple workloads to share the same infrastructure. VMware didn’t replace physical servers. It made workloads portable — decoupled from the specific hardware they ran on, allocable dynamically based on need.

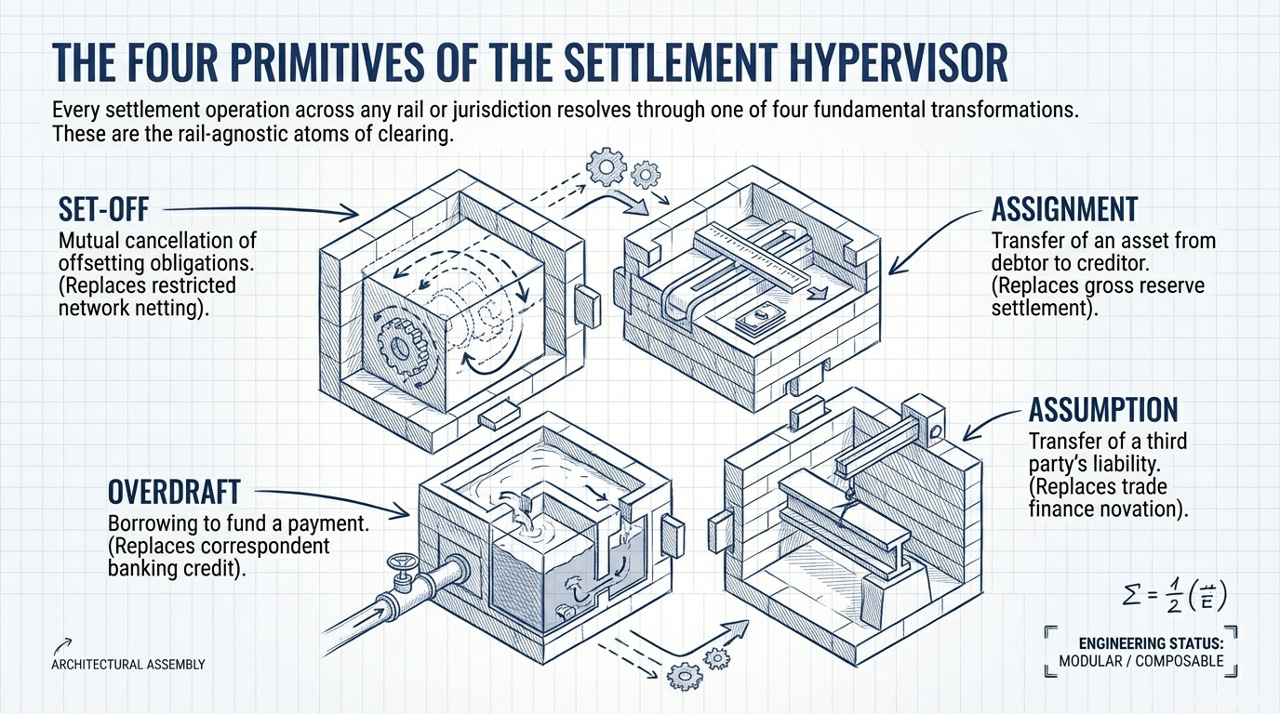

The equivalent for settlement is formalizing the operations that payment systems perform into standardized, composable primitives. Every settlement operation, regardless of rail or jurisdiction, resolves through one of four fundamental transformations: mutual cancellation of offsetting obligations (set-off), transfer of an asset from debtor to creditor (assignment), borrowing to fund a payment (overdraft), or transfer of a third party’s liability (assumption). These are the atoms of settlement, and they are rail-agnostic. Visa’s netting is set-off on a restricted graph. Fedwire settlement is assignment of central bank reserves. Correspondent banking credit is overdraft. Trade finance novation is assumption.
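One way to picture the four primitives is as operations on a shared ledger of balances and obligations. The sketch below is a toy model under loud assumptions: the `Ledger` class, its method signatures, and the treatment of overdraft as newly created credit are all hypothetical, and nothing here describes the interface of any existing rail.

```python
from collections import defaultdict

class Ledger:
    def __init__(self):
        self.balances = defaultdict(int)     # settlement asset held by each party
        self.obligations = defaultdict(int)  # (debtor, creditor) -> amount owed

    def owe(self, debtor, creditor, amount):
        self.obligations[(debtor, creditor)] += amount

    def set_off(self, a, b):
        """Mutual cancellation of offsetting obligations between a and b."""
        cancelled = min(self.obligations[(a, b)], self.obligations[(b, a)])
        self.obligations[(a, b)] -= cancelled
        self.obligations[(b, a)] -= cancelled
        return cancelled

    def assignment(self, debtor, creditor, amount):
        """Transfer of an asset from debtor to creditor, discharging the obligation."""
        if self.balances[debtor] < amount or self.obligations[(debtor, creditor)] < amount:
            raise ValueError("insufficient balance or obligation")
        self.balances[debtor] -= amount
        self.balances[creditor] += amount
        self.obligations[(debtor, creditor)] -= amount

    def overdraft(self, lender, borrower, amount):
        """Borrowing to fund a payment (assumption: the lender creates the credit)."""
        self.balances[borrower] += amount
        self.owe(borrower, lender, amount)

    def assumption(self, old_debtor, new_debtor, creditor, amount):
        """A third party takes over a debtor's liability to the creditor."""
        if self.obligations[(old_debtor, creditor)] < amount:
            raise ValueError("no such obligation to assume")
        self.obligations[(old_debtor, creditor)] -= amount
        self.obligations[(new_debtor, creditor)] += amount

ledger = Ledger()
ledger.owe("A", "B", 100)
ledger.owe("B", "A", 70)
cancelled = ledger.set_off("A", "B")   # 70 cancels; A still owes B 30
ledger.overdraft("Bank", "A", 30)      # A borrows 30 to fund the residual
ledger.assignment("A", "B", 30)        # A pays B; obligation discharged
print(cancelled, ledger.balances["B"], ledger.obligations[("A", "Bank")])
```

The usage lines compose three of the primitives in sequence: set-off shrinks the obligation, overdraft funds the residual, and assignment discharges it, leaving A with a single debt to the lender.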

Formalizing these primitives — defining them precisely, making them composable, pricing them across all relevant dimensions — is the settlement hypervisor. It doesn’t replace existing rails. It abstracts them, making obligations portable across whatever underlying infrastructure is cheapest for a given transaction’s risk, urgency, and cost profile. And just as cloud computing succeeded because virtualization collapsed complex hardware resources — each previously requiring a dedicated specialist — into parameterized API calls (CPU cores, gigabytes of RAM, network bandwidth as numbers you specify on demand), the settlement hypervisor succeeds because Zero Alpha collapses each pricing dimension from a complex object requiring a dedicated desk into a bounded parameter that a solver can optimize jointly.

Cloud services. The second step was the cloud itself: standardized, composable, on-demand services (compute, storage, networking) available through uniform APIs at any scale. AWS didn’t just make existing workloads cheaper. It enabled entirely new categories of application — SaaS, mobile-first, real-time analytics — that couldn’t exist in the mainframe model, because the minimum viable infrastructure investment was too high.

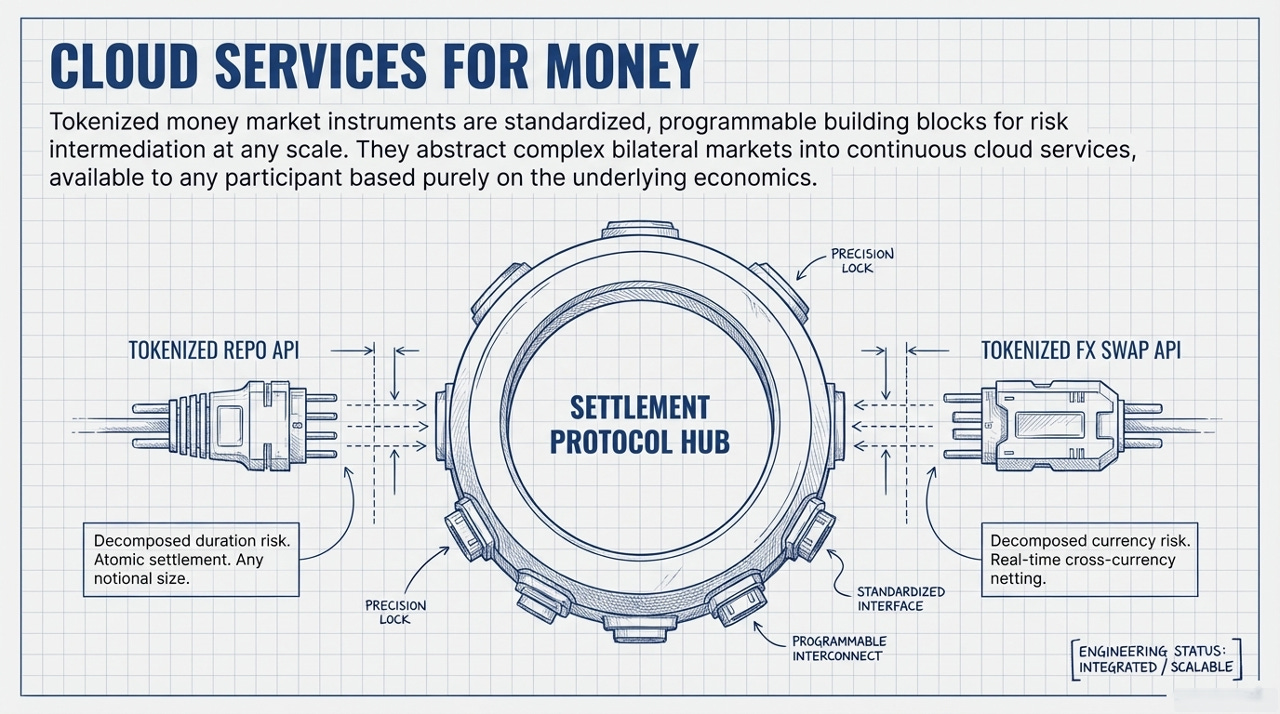

The equivalent for settlement is tokenized money market instruments: the standardized, composable, programmable building blocks that enable risk intermediation at any scale. Consider what this means in practice:

Tokenized money — regulated stablecoins, tokenized deposits, or composites that combine these — acts as the base settlement asset: programmable, composable, operating 24/7/365 across major currency pairs. End users experience fast finality; behind the scenes, financial intermediaries dynamically batch and settle the compressed residual amounts after netting.

A tokenized repurchase agreement is a standardized short-term lending instrument that can be executed at any notional size, settled atomically, and priced dynamically based on collateral quality and term. Today, repo is largely a wholesale market, with ticket sizes typically in the tens or hundreds of millions of dollars. Tokenized, it becomes a cloud service available to any participant at whatever size the economics support.

A tokenized FX swap can be decomposed into spot and forward legs, priced against real-time cross-currency netting opportunities, and executed atomically across settlement domains: composable building blocks for cross-currency optimization at any scale.

The same logic applies to tokenized overnight indexed swaps (pricing the time value of settlement acceleration), commercial paper, and certificates of deposit (providing short-duration credit intermediation for Tier 2 liquidity providers).

Each of these instruments addresses a specific risk dimension — duration, currency, interest rate, credit — through a standardized interface at any notional size. They are the cloud services of the settlement layer: individually useful, but transformative when composed.

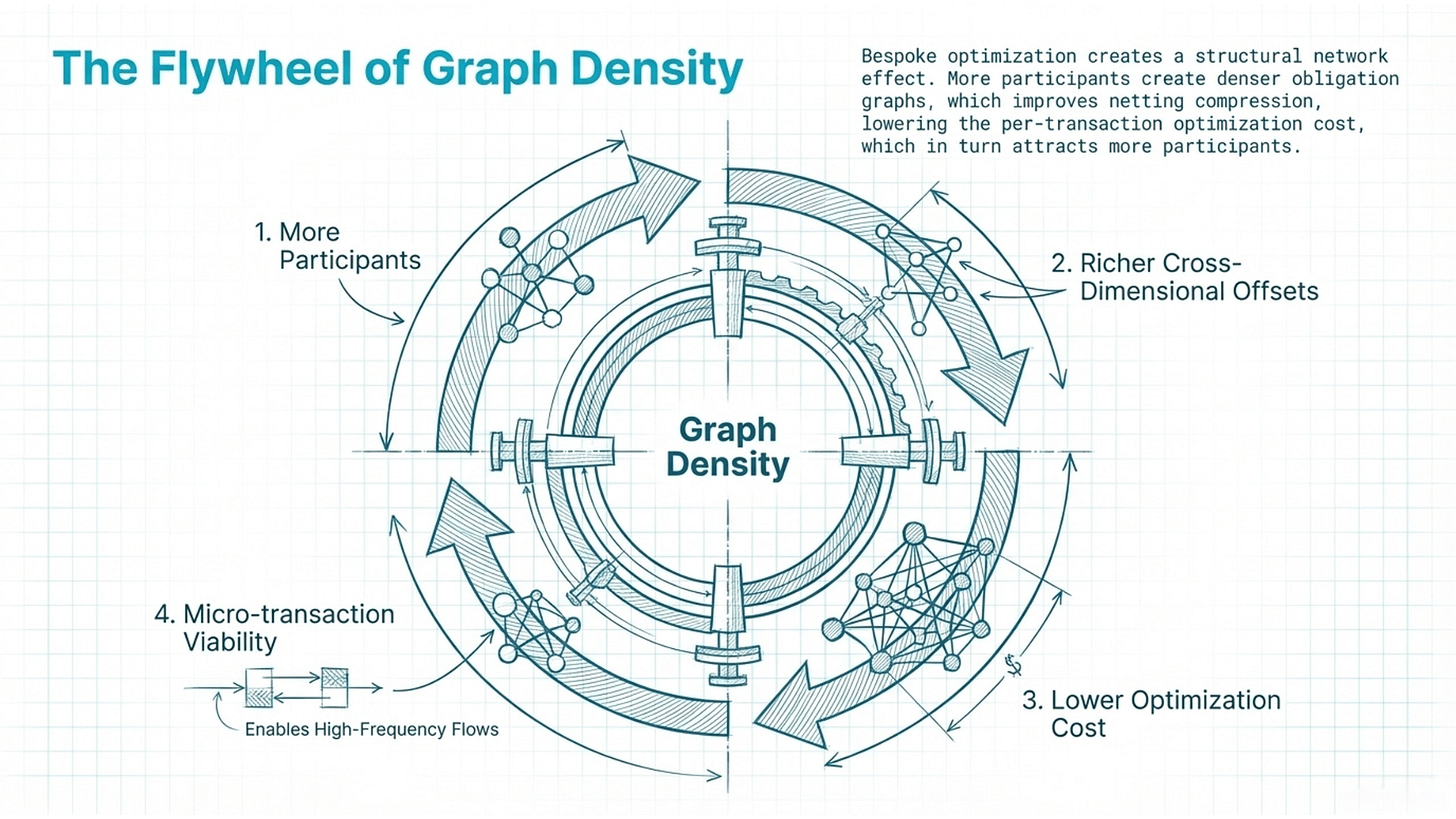

The adoption dynamic. The flywheel is self-reinforcing: each additional participant increases flow density, which improves netting compression, which reduces per-transaction cost, which attracts more participants. And because each obligation is optimized individually rather than batched generically, the system gets smarter as it gets bigger — more flow means more cross-dimensional offsetting opportunities, not just more volume through the same static pipes. The initial incentive to adopt is the concatenation, fragmentation, and globalization pain that processors and platforms already experience. The deeper incentive — tokenized money market intermediation, micro-transaction viability — unlocks as the network matures.

And just as cloud computing didn’t require enterprises to rip out their data centers on day one, cloud clearing can coexist with existing rails. The formalized primitives abstract over Visa, ACH, and Fedwire without replacing them. The tokenized instruments layer on top of existing money markets without displacing them. Gradual adoption, expanding the optimization boundary incrementally, is both technically feasible and strategically rational.

6. A Three-Tier Market Microstructure for Commerce

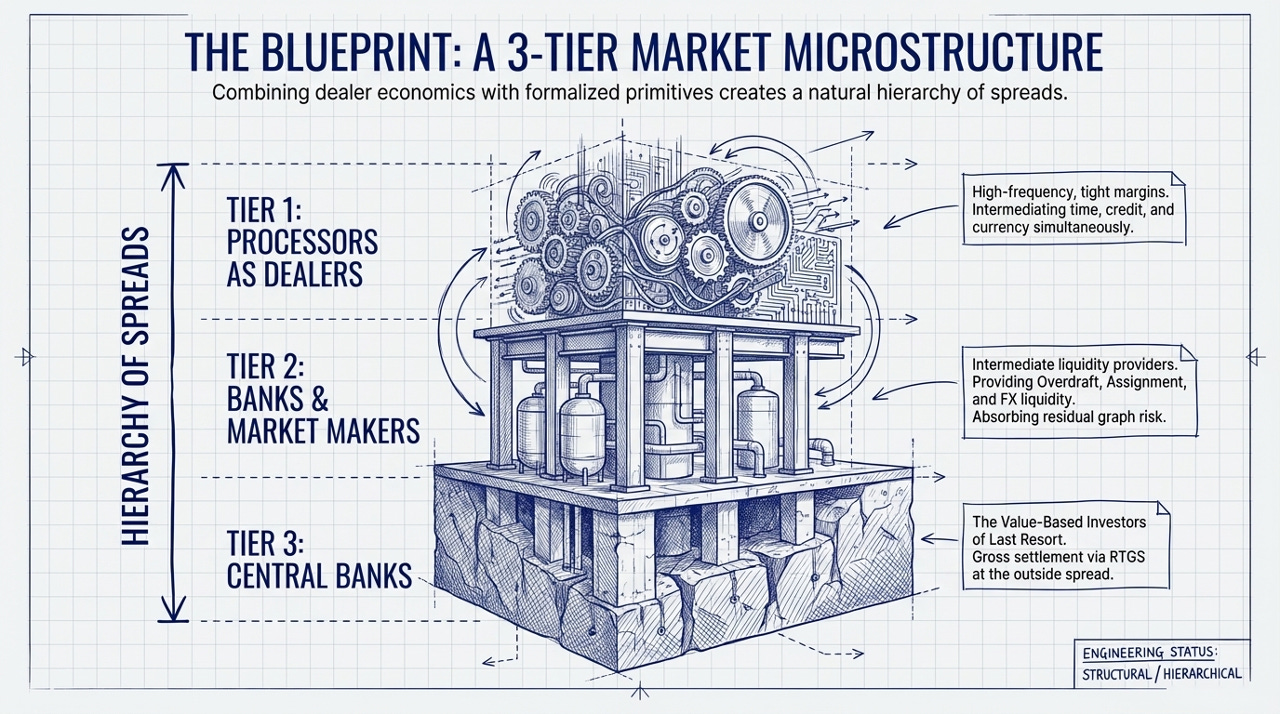

Combining Treynor’s dealer model, Mehrling’s hierarchy of money, and the structural properties of commercial flow, a three-tier market microstructure for settlement emerges.

Tier 1: Processors as Dealers. Payment processors, platforms, and large marketplaces already perform a dealer function. A processor sits between card-issuer receivables (which take T+1 to T+3 to settle) and merchant/platform payouts (which demand instant or near-instant disbursement). The processor buys receivables at a discount — paying the merchant now, less a processing fee — and collects the full settlement from the card network later. The spread is the processor’s revenue. The float — receivables purchased but not yet settled — is the processor’s inventory.

This is exactly Treynor’s model: intermediation between time-sensitive transactors, spread revenue, inventory risk, and layoff to deeper capital when the position exceeds tolerance.

But processors do more than time intermediation. By aggregating flows across a diverse merchant base — startups, small businesses, platforms, enterprises — they provide implicit credit risk intermediation. The processor knows each merchant’s volume history, chargeback rates, and payout patterns. When it offers instant payouts, it is making a credit judgment about merchant reliability, backed by its aggregate portfolio. It is also providing implicit FX intermediation for cross-border merchants, converting currencies at a spread that reflects its own hedging costs and flow imbalances.

In the cloud clearing model, processors operate at the inside spread: high frequency, tight margins, limited capital commitment, intermediating across time value, credit risk, and currency exposure simultaneously. The formalized primitives don’t ask processors to do something new — they give processors better tools for what they already do, with explicit pricing across all dimensions rather than bundled, opaque fees.

Tier 2: Banks and Market Makers as Intermediate Liquidity Providers. Between the processor-as-dealer and the ultimate settlement backstop, a second tier of intermediation serves a function analogous to Treynor’s value-based investors. These are capitalized institutions — banks, market makers, asset managers, specialized liquidity providers — that inject liquidity at specific points in the obligation graph through formalized mechanisms.

A bank might provide overdraft: lending against a participant’s position in the obligation graph to bridge a timing gap between inflows and outflows. This is the settlement analog of a credit facility, but priced dynamically based on the participant’s real-time graph position rather than a static credit assessment.

A market maker might purchase receivables via assignment: stepping into the creditor’s position at a discount, effectively providing factoring services within the protocol. Because the underlying receivable is a zero-alpha commercial obligation (it won’t move against the purchaser), the adverse selection cost is structurally bounded. The market maker’s risk is the credit quality of the obligor and the time to settlement, both of which are knowable at the point of trade.

A bank might provide FX liquidity: swapping currencies to enable cross-currency netting that wouldn’t otherwise clear. If the obligation graph contains offsetting flows in USD and EUR, but they aren’t perfectly balanced, a bank can provide the marginal FX liquidity needed to complete the cycle, earning the spread for its intermediation.
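The marginal FX liquidity can be sketched for the simplest case of two parties with opposing flows. This is a hypothetical illustration: the function name, the reference rate, and the spread are assumptions, and it assumes both parties accept the reference rate for the portion that offsets.

```python
from decimal import Decimal


def fx_gap(usd_owed, eur_owed, usd_per_eur, spread_bps):
    """Split opposing USD and EUR flows into an offset part and a gap.

    usd_owed: USD that party A owes party B.
    eur_owed: EUR that party B owes party A.
    Assumes both sides accept `usd_per_eur` as the netting reference rate.
    """
    eur_leg_in_usd = eur_owed * usd_per_eur
    # The portion that nets out without any currency changing hands.
    offset = min(usd_owed, eur_leg_in_usd)
    # The imbalance a liquidity provider must fund to complete the cycle.
    gap = abs(usd_owed - eur_leg_in_usd)
    # The provider earns its spread only on the gap, not on the gross flow.
    fee = gap * spread_bps / Decimal(10_000)
    return {"offset_usd": offset, "gap_usd": gap, "bank_fee_usd": fee}


result = fx_gap(Decimal("1000000"), Decimal("900000"),
                Decimal("1.10"), Decimal("5"))
```

In this example the bank funds a gap of 10,000 USD rather than converting the full 1,000,000 USD flow, which is why the intermediation can be priced on the margin.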

Each of these functions operates at a wider spread and longer time horizon than the processor tier. These participants hold position through settlement cycles, absorb the residual risk that processors cannot, and earn a return for providing the capital depth that makes the system stable. Their willingness to participate is what determines the effective outside spread of the cloud clearing system — and because the underlying flow is zero-alpha, that outside spread can be tighter than in markets where adverse selection is the dominant cost.

Tier 3: Central Banks as Last Resort. At the base of the hierarchy, central banks remain what they have always been: the value-based investors of last resort. When all netting fails, when all commercial intermediation is exhausted, when the obligation graph cannot be compressed further, the residual settles in central bank money: through Fedwire, through the Bank of England’s RTGS, through T2 (the successor to TARGET2) in the eurozone. This is the outside spread of the global payment system: expensive, slow, but unconditionally reliable.

The three tiers form a natural hierarchy of spreads. Processors earn the tightest spread on the highest frequency. Banks and market makers earn a wider spread on lower frequency, higher capital-commitment intermediation. Central banks provide the ultimate backstop at the widest spread. Each tier’s economics are anchored by the tier below it, just as in Treynor’s original model the dealer’s inside spread is determined by the cost of laying off to the value-based investor.
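The way gross obligations fall through the tiers can be sketched as a simple waterfall. This is a single-currency toy under stated assumptions: it uses plain multilateral net positions in place of the protocol's actual compression algorithm, and `tier2_capacity` is a hypothetical stand-in for the capital that banks and market makers are willing to commit.

```python
from collections import defaultdict


def net_positions(obligations):
    """obligations: list of (debtor, creditor, amount) in one currency."""
    net = defaultdict(int)
    for debtor, creditor, amount in obligations:
        net[debtor] -= amount
        net[creditor] += amount
    return dict(net)


def settlement_waterfall(obligations, tier2_capacity):
    """Split gross obligations across netting, tier 2 funding, and tier 3."""
    gross = sum(amount for _, _, amount in obligations)
    net = net_positions(obligations)
    # After multilateral netting, only net creditor positions need funding.
    net_to_settle = sum(v for v in net.values() if v > 0)
    netted_away = gross - net_to_settle
    # Intermediate liquidity providers absorb what their capital allows.
    tier2 = min(net_to_settle, tier2_capacity)
    # Whatever remains settles in central bank money.
    tier3 = net_to_settle - tier2
    return {"gross": gross, "netted": netted_away,
            "tier2": tier2, "tier3": tier3}


# A owes B 100, B owes C 80, C owes A 60 (hypothetical amounts).
flows = [("A", "B", 100), ("B", "C", 80), ("C", "A", 60)]
result = settlement_waterfall(flows, tier2_capacity=30)
```

Here 240 of gross obligations compress to 40 of net positions; tier 2 funds 30 and only 10 reaches the central bank layer. The widest spread is paid on the smallest slice of flow.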

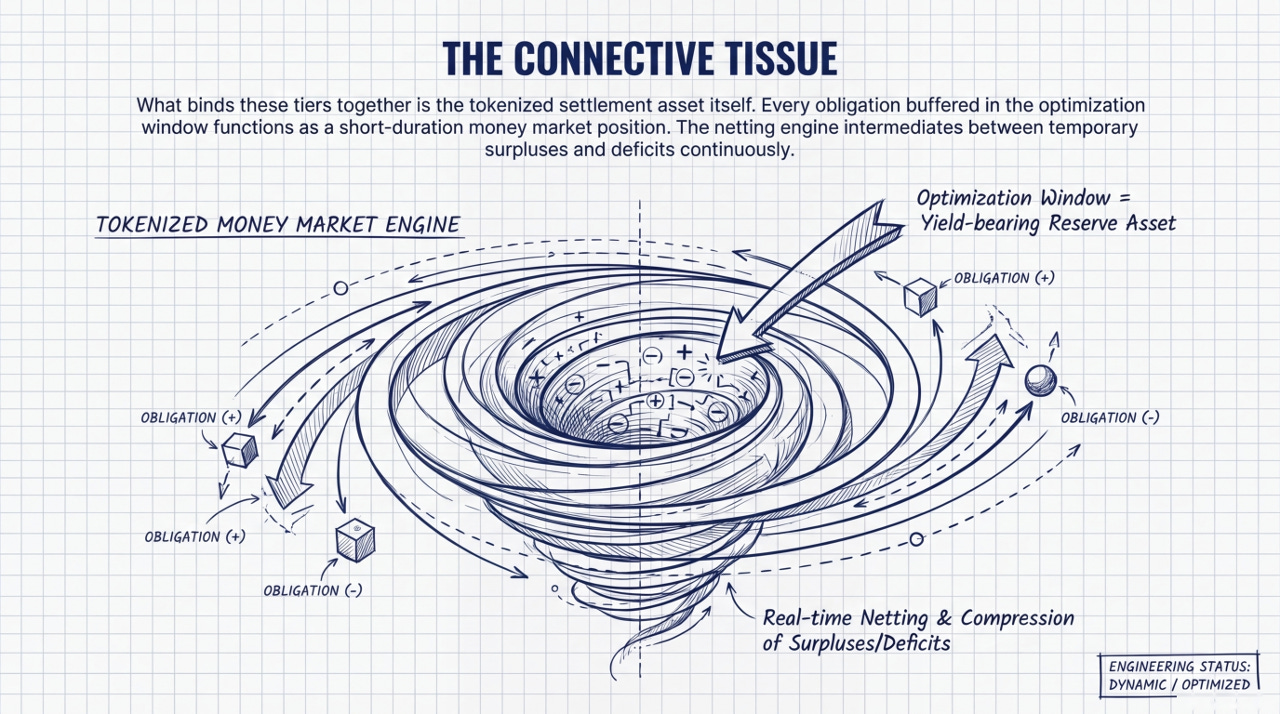

The tokenized money market as connective tissue. What binds these tiers together is the settlement asset itself: a programmable, tokenized instrument backed by regulated deposits and sovereign securities, operating continuously, and yielding a return on reserve assets. Every obligation buffered in the optimization window is, for the duration of that window, a short-duration money market position. The netting engine intermediates between parties with temporary surpluses and temporary deficits — which is exactly what money markets do, just expressed through settlement optimization rather than explicit lending. This is how tokenized money markets enter global commerce — not as a replacement for existing money markets, but as an emergent property of settlement optimization that makes money market economics available to any participant at any transaction size.
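As a rough arithmetic illustration of a buffered obligation behaving like a short-duration money market position, the sketch below accrues simple interest on an amount held during the optimization window. The function name, the 360-day count, and the sample yield are assumptions chosen for illustration only.

```python
def float_yield(amount, annual_yield, hours_buffered, day_count=360):
    """Simple interest earned on `amount` while it waits to be netted.

    Assumes the reserve assets behind the settlement token earn
    `annual_yield` and that interest accrues linearly within the window.
    """
    if hours_buffered < 0:
        raise ValueError("hours_buffered must be non-negative")
    return amount * annual_yield * (hours_buffered / 24) / day_count


# Hypothetical: 1,000,000 buffered for 6 hours at a 4.5% reserve yield.
accrued = float_yield(1_000_000, 0.045, 6)
```

With these inputs the accrual is about 31.25, small per obligation but continuous across every obligation in the window.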

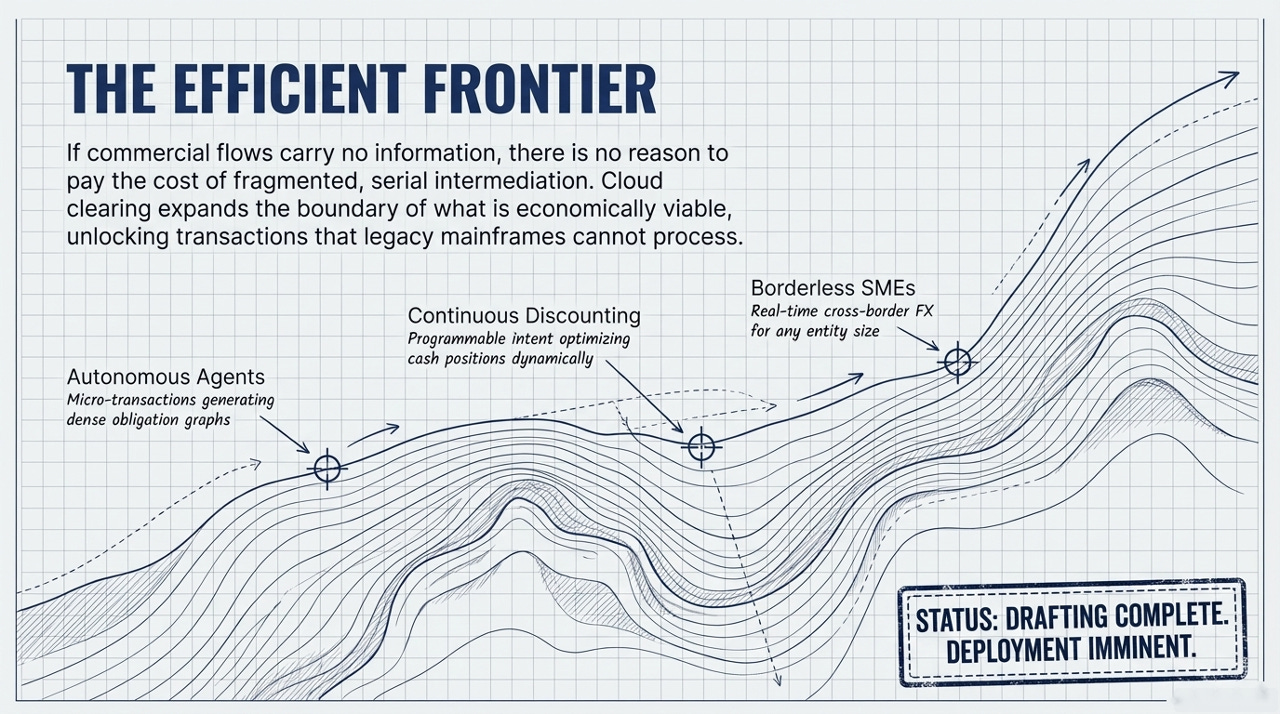

New categories of economic activity. Just as cloud computing created categories of application that couldn’t exist on mainframes, cloud clearing could enable transaction types that cannot exist in mainframe settlement: micro-transactions between autonomous AI agents generating dense obligation graphs, continuous dynamic discounting through the protocol’s intent system, affordable cross-border FX settlement for small businesses, and programmable treasury operations that optimize cash positions continuously rather than in batch cycles: what might be called streaming money, where value flows are managed as continuous signals rather than discrete batch events.

7. What This Framework Does Not Address

Three domains are deliberately out of scope: identity, fraud, and KYC/AML (this framework assumes licensed institutions and established enterprises where identity is bounded); systemic concentration risk (cloud clearing could concentrate critical infrastructure, and protocol design should address this through open standards and distributed governance); and regulatory path dependency (settlement obligations have legal domicile and jurisdictional compliance requirements that the cloud computing analogy underweights — regulatory adaptation, not technology, will likely be the binding constraint on deployment speed).

These are real constraints, not cosmetic ones. But they are constraints on the pace of deployment, not on the direction.

Conclusion

The hierarchy of dealer functions that Treynor identified in securities markets and Mehrling extended to money markets exists in payment settlement today — but fragmented across dozens of purpose-built systems, each optimizing a single dimension, none seeing the whole. Formalizing the primitives, tokenizing the instruments, and virtualizing the clearing function — applying financial compression not within isolated systems but across the full obligation graph — creates the conditions for these fragments to be composed into a unified market microstructure. Zero Alpha — the absence of information content in commercial flows — is what makes this composition possible. It is also what makes it necessary: if the flows carry no information, there is no reason to pay the cost of fragmented, serial, dimension-by-dimension intermediation. The efficient frontier is a single, continuously optimized, multidimensional clearing venue. Cloud clearing is the name for getting there.

References

Treynor, Jack L. “Economics of the Dealer Function.” Financial Analysts Journal 43, No. 6 (November/December 1987): 27-34.

Mehrling, Perry. The New Lombard Street: How the Fed Became the Dealer of Last Resort. Princeton University Press, 2011.

Mehrling, Perry. “Essential Hybridity: A Money View of FX.” Journal of Comparative Economics 41 (2013): 355-363.

Mehrling, Perry. “Economics of Money and Banking.” Coursera / Columbia University, 2012.

Fleischman, Tomaž and Dini, Paolo. “Mathematical Foundations for Balancing the Payment System in the Trade Credit Market.” Journal of Risk and Financial Management 14, No. 9 (2021): 452.

Buchman, Ethan et al. “Cycles Protocol Whitepaper.” Informal Systems, 2024. https://cycles.money/whitepaper.pdf
